Introduction

Gout is an inflammatory arthritis that occurs due to the crystallisation of uric acid within joints and a consequent immune response.1 In Australia, 1.5% of the total population and up to 10% of elderly males are affected, making gout one of the most common causes of inflammatory arthritis nationally.2 The prevalence of gout globally is increasing.3,4 The consequences are not limited to joints but involve multiple organ systems, and gout is a significant cause of morbidity and premature mortality.5,6 This, combined with the fact that it is generally poorly managed,2 highlights an urgent need for further epidemiological and clinical research into gout.

Epidemiological studies are dependent on medical records to gather research information. Medical records in Australia use the International Classification of Diseases (ICD) coding system to list various diagnoses on discharge (including gout), and the validity of research conducted using these records is dependent on the accuracy of the ICD coding. However, studies from the USA that assessed the accuracy of ICD-9 coding of gout, by comparing patients coded as having gout against diagnostic criteria or against a rheumatologist’s opinion based on chart review, have demonstrated poor results.7,8

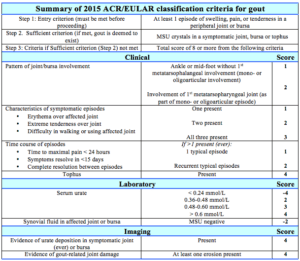

The gold standard for diagnosing gout is the demonstration of monosodium urate (MSU) crystals in the synovial aspirate. Several related criteria have been developed to improve diagnosis, the most recent being the 2015 gout classification criteria published by the American College of Rheumatology and the European League Against Rheumatism (ACR/EULAR) (see Appendix).9 These criteria were intended to counter the suboptimal sensitivity and specificity of the pre-existing criteria. They comprise 8 domains, including clinical, laboratory and radiological domains. Demonstrating MSU crystals in a symptomatic joint or bursa is deemed to meet the “sufficient criterion” without any additional scoring requirement.

The accuracy of ICD-10 coding of gout has not been examined in an Australian administrative dataset. The objective of this study was to assess the accuracy of the ICD-10 discharge diagnosis of gout in an Australian inpatient setting, with respect to evidence of gout documented during the admission. The secondary aims were to assess the accuracy of the ICD-10 discharge diagnosis of gout against the ACR/EULAR gout classification criteria (GCC) and also against an independent specialist doctor’s opinion of the accuracy of the diagnosis after case-note review.

Methods

This study to validate the diagnosis of gout was conducted at two tertiary teaching hospitals in Perth, Western Australia. The discharge databases of Fiona Stanley Hospital (FSH) and Sir Charles Gairdner Hospital (SCGH) were accessed to identify all inpatients who had been discharged with an ICD-10 code for gout (M10), regardless of the ward or clinical service of the admission. The presence of the M10 code was the inclusion criterion for the study. Patients were excluded if no medical record was available. We studied two periods to increase the reliability of the results. The period at FSH was from October 2014 to 7 July 2015, and at SCGH from July 2016 to July 2017.

The case record for each patient’s admission was reviewed by two senior doctors (one from each hospital). The inpatient record and discharge summary were assessed for documentation that the treating clinician believed that the patient had active gout during the admission. Possibilities included:

- A diagnosis of gout was stated in the notes, discharge summary or imaging reporting; or

- A joint aspirate was reported to contain MSU crystals; or

- Documentation of commencement of pharmacotherapy usually reserved for gout (colchicine, allopurinol, febuxostat, prednisolone, probenecid or a non-steroidal anti-inflammatory drug (NSAID)) in a consistent clinical setting.

Clinical data, including history and examination findings and investigation results (laboratory and radiological), were extracted to calculate the score as per the EULAR/ACR criteria.9 Patients who met the sufficient criterion (synovial fluid demonstrating MSU crystals) did not have a score calculated. For the remainder, the admission details were used to calculate the classification criteria score. Patients who met the minimum score of at least 8 were classified as having gout.9 No score was calculated for patients with insufficient documentation. The notes were also assessed to determine whether a rheumatologist had reviewed the patient.
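The classification logic described above can be sketched as follows. This is a minimal illustration only, not the authors' actual data-extraction tool: the `CaseRecord` type, its field names and the `classify` function are hypothetical, and the sketch assumes the domain scores have already been summed from the case notes. The only values taken from the text are the threshold of 8 and the rule that MSU crystals in synovial fluid meet the sufficient criterion without scoring.

```python
from dataclasses import dataclass
from typing import Optional

GCC_THRESHOLD = 8  # minimum score stated in the Methods


@dataclass
class CaseRecord:
    """Hypothetical summary of one admission, for illustration only."""
    msu_crystals_seen: bool          # MSU crystals in synovial fluid (sufficient criterion)
    gcc_score: Optional[int] = None  # None when documentation was insufficient to score


def classify(case: CaseRecord) -> str:
    """Return the ACR/EULAR GCC status category for one admission."""
    if case.msu_crystals_seen:
        # Sufficient criterion met: no score is calculated.
        return "met sufficient criterion"
    if case.gcc_score is None:
        return "unable to be scored"
    if case.gcc_score >= GCC_THRESHOLD:
        return "met classification criteria"
    return "did not meet classification criteria"
```

The order of the checks mirrors the text: the sufficient criterion is evaluated first, so a patient with MSU crystals is never scored, and missing documentation is kept as its own category rather than being counted as a failure to meet the criteria.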

Based on the case-note review of FSH patients only, an independent clinician (a general medicine consultant or rheumatology advanced RACP trainee) assessed whether each patient had definite or possible gout, was unlikely to have gout, or had insufficient information to consider the diagnosis (“Independent Doctor’s opinion”).

Descriptive statistics summarised the demographic and clinical characteristics of patients (SPSS version 23; IBM, Armonk, NY, USA).

Reliability between the two assessors (one at each hospital) was determined by a review of ten files by both assessors. The percentage agreement and kappa scores were calculated for the categorical items, which were whether there was documented evidence of gout (yes or no) and the ACR/EULAR criteria status (met sufficient criterion, met classification criteria, did not meet classification criteria or unable to be scored).
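As an illustration of the two agreement measures named above, the following sketch computes percentage agreement and Cohen's kappa for two raters. It is a plain-Python sketch under stated assumptions, not the SPSS procedure used in the study; the function names and any ratings passed to them are hypothetical.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence


def percent_agreement(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Percentage of items on which the two raters gave the same category."""
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and of equal length")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)


def cohen_kappa(a: Sequence[Hashable], b: Sequence[Hashable]) -> Optional[float]:
    """Cohen's kappa, or None when it is undefined.

    Kappa is (p_o - p_e) / (1 - p_e), where p_o is observed agreement and
    p_e is the agreement expected by chance from each rater's marginals.
    """
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(a)
    p_o = sum(x == y for x, y in zip(a, b)) / n
    count_a, count_b = Counter(a), Counter(b)
    p_e = sum(count_a[k] * count_b[k] for k in count_a) / (n * n)
    if p_e == 1.0:
        # Both raters used one and the same category for every item,
        # so the denominator is zero and kappa cannot be determined.
        return None
    return (p_o - p_e) / (1 - p_e)
```

When every file receives the same category from both assessors, `percent_agreement` returns 100.0 while `cohen_kappa` returns `None`, which is the situation in which a kappa value cannot be reported for a constant variable.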

Results

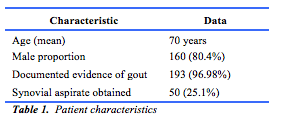

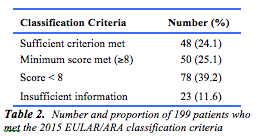

One hundred and ninety-nine (100 at FSH and 99 at SCGH) case notes were reviewed, based on the presence of the discharge M10 diagnostic code. One hundred and sixty (80.4%) were male. The mean age was 70.9 years (IQR 60-84.5 years). In 193 cases (97.0%), documented evidence of gout was identified (Table 1). The remaining 6 cases (3.0%) were considered coding errors, with no reference to gout found in the patient’s inpatient file or discharge summary. Ninety-eight patients (49.2%) met the 2015 ACR/EULAR GCC. Of these, 48 (49%) met the sufficient criterion and 50 (51%) met the minimum score requirement. The remaining 101 patients (50.8%) either did not meet the minimum score required (78 patients, 39.2%) or had insufficient information available in the notes to accurately calculate the score (23 patients, 11.6%) (Table 2).
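The proportions reported above follow directly from the counts, as the short check below shows. The counts are those given in the text; the dictionary labels and the `pct` helper are illustrative names only.

```python
TOTAL_CASES = 199  # case notes reviewed (100 FSH + 99 SCGH)

# Counts as reported in the Results.
counts = {
    "documented evidence of gout": 193,
    "met ACR/EULAR GCC": 98,
    "did not meet minimum score": 78,
    "insufficient information": 23,
}


def pct(numerator: int, denominator: int) -> float:
    """Percentage rounded to one decimal place."""
    return round(100 * numerator / denominator, 1)


percentages = {label: pct(n, TOTAL_CASES) for label, n in counts.items()}

# The three GCC outcome groups should account for every reviewed case.
gcc_groups_total = (
    counts["met ACR/EULAR GCC"]
    + counts["did not meet minimum score"]
    + counts["insufficient information"]
)
```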

Of the 100 FSH patients assessed to determine whether an “Independent Doctor opinion” could confirm the diagnosis of gout, 73 (73%) were felt to have gout. Most (75%) had definite gout, and 25% had possible gout. Of the remaining 27 patients, 13 were classified as unlikely to have gout and 14 had insufficient information (Table 3).

In the total group, a rheumatology consult was requested in 44 cases (22.8%). Of these, 34 (77.27%) met the ACR/EULAR classification criteria for gout. In contrast, of the 149 patients for whom no rheumatology consultation was sought, 64 (42.95%) met the ACR/EULAR classification criteria for gout (OR 5.44, p = 0.0025). In patients who had a rheumatology consultation, 22 (27%) had joint aspirates.
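For readers who wish to see how an odds ratio is formed from a two-by-two table, a minimal sketch follows. It computes only the crude (unadjusted) ratio from the counts given above; the text does not describe how the reported OR was estimated, so the crude value is not assumed to reproduce it. The function and variable names are hypothetical.

```python
def odds_ratio(exposed_yes: int, exposed_no: int,
               unexposed_yes: int, unexposed_no: int) -> float:
    """Crude odds ratio from a 2x2 table: (a * d) / (b * c)."""
    if exposed_no == 0 or unexposed_yes == 0:
        raise ZeroDivisionError("odds ratio is undefined when b or c is zero")
    return (exposed_yes * unexposed_no) / (exposed_no * unexposed_yes)


# Counts from the Results: consultation requested vs not requested.
consult_total, consult_met = 44, 34
no_consult_total, no_consult_met = 149, 64

crude_or = odds_ratio(
    exposed_yes=consult_met,
    exposed_no=consult_total - consult_met,            # 10 did not meet the GCC
    unexposed_yes=no_consult_met,
    unexposed_no=no_consult_total - no_consult_met,    # 85 did not meet the GCC
)
```

A confidence interval or p-value would require a further step (for example a chi-squared or Fisher's exact test), which is deliberately left out of this sketch.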

Agreement between reviewers with regard to documentary evidence of gout during the admission was 100%. Kappa could not be determined because all participants had documentary evidence of gout and the variable was therefore constant. Agreement for cases meeting the 2015 ACR/EULAR GCC was also excellent, with kappa = 0.846. Of the 100 patients for whom the “independent doctor’s opinion” was obtained, 22 (22%) had a rheumatology consultation. Nineteen (86.3%) were felt likely to have gout, 1 each (4.5%) had possible or unlikely gout, and in 1 patient there was insufficient information for a diagnosis.

Discussion

The primary aim was to determine the accuracy of the ICD-10 discharge diagnosis of gout with respect to documented evidence of gout during the admission. In only a small number of patients was the ICD coding inaccurate, with no documentary evidence found in the notes to confirm that any clinician had diagnosed gout during the admission. This was reassuring, and suggests that in the majority of cases the ICD-10 discharge diagnosis accurately reflects the treating clinician’s diagnosis.

The second aim was to assess the accuracy of the ICD-10 discharge diagnosis of gout in an Australian inpatient setting against the validated ACR/EULAR GCC. In our case note review, just over half the patients did not meet the ACR/EULAR GCC. This raises concerns over the validity of clinical diagnoses of gout, and hence the ICD coding assigned, in over one half of the cases. This is consistent with previous evidence suggesting that the ICD diagnoses of gout may not be specific for that disease. In a 2009 study, only 36% of patients with an ICD-9 diagnosis of gout fulfilled the ACR 1977 preliminary criteria.7 As in this study, incomplete documentation was a frequent impediment to accurately assessing the final score. Thus, the number of patients meeting classification criteria may be underestimated. Whilst the accuracy of coding when using the case notes as the gold standard appears to be excellent, the specificity of the diagnosis remains in question. This has significant implications for using datasets compiled for administrative purposes for research. Whilst the majority of cases coded for gout were diagnosed by the treating clinicians, the level of experience and diagnostic skill will vary. The 2015 ACR/EULAR classification criteria were developed to ensure that homogeneous populations are included in clinical trials of gout. In our audit there was a sizeable discrepancy between the accuracy of the ICD discharge coding (96.98%) and the number of people meeting the ACR/EULAR GCC (49.2%). This is perhaps not surprising, given that these are not intended to be diagnostic criteria. Furthermore, incomplete documentation may have skewed the results.

Our final aim was to assess the accuracy of the ICD-10 discharge diagnosis of gout against an independent doctor’s opinion. A comprehensive review of the notes by CS found that in one third of the cases the diagnosis of gout could not be confirmed. Thus, the diagnosis of gout in Western Australian administrative data sets may be incorrect in as many as one third of cases. This gives emphasis to the concerns voiced above.

In our study a review by a rheumatologist significantly increased the odds of the patient meeting the 2015 ACR/EULAR criteria. In the tertiary setting in Western Australia, most inpatients with a discharge diagnosis of gout do not receive care by rheumatologists and are therefore less likely to meet ACR/EULAR GCC.10 This also raises concerns about the specificity of ICD discharge diagnoses.

A limitation of our study was the time frame used. One of the tertiary hospitals examined in this study was newly commissioned, and had opened in a staged fashion. Both the patients included and access to rheumatology review may have been biased by this history. Our study was retrospective, and poor case note documentation impeded the ability of the independent assessor to assess whether the patient had clinical evidence of gout. This may have contributed to our results showing that a diagnosis could not be substantiated in a third of patients. Another limitation was the absence of stratification of gout as a primary or secondary diagnosis. We utilised one independent case-record reviewer at each hospital in order to increase generalisability of the results. Although agreement was excellent, this may have introduced bias.

Conclusion

Our study demonstrates that the majority of patients who received an ICD-10 discharge code for gout did have documented evidence of gout during the admission. However, over half of the patients did not meet the 2015 EULAR/ACR GCC, and nearly one third of cases could not be verified as having gout upon review by an independent rheumatologist. Therefore, the diagnosis of gout in a substantial proportion of cases assigned a diagnostic code for gout is uncertain. This may have implications for the specificity of ICD-10 discharge coding in Australian datasets, which is used for epidemiological data studies in many areas.

We found that a rheumatology consultation significantly improved the odds of meeting the 2015 EULAR/ACR GCC. Such a consultation for general medical inpatients is likely to improve the accuracy of the diagnosis and is recommended for all patients likely to have gout.

Provenance: Externally reviewed

Funding: Not required

Ethical Approval: Not required

Declarations: No conflicts of interest declared

Corresponding Author: Dr Chanakya Sharma, Rheumatology Department, Fiona Stanley Hospital, Murdoch, WA 6150, Australia. E-mail: Chanakya.Sharma@health.wa.gov.au